DAP2: OPeNDAP

DAP2 (Data Access Protocol version 2) is the core protocol used by OPeNDAP (Open-source Project for a Network Data Access Protocol) for data transport and access over HTTP. DAP is widely used in the scientific data community as a remote access protocol compatible with the NetCDF data model. It enables general-purpose remote access and subsetting of datasets and is supported by many geospatial and earth-science tools, including the NetCDF library itself.

Arraylake implements the DAP2 standard.

Activating DAP2 for Arraylake Datasets

DAP2 can be activated using the Arraylake command line interface (CLI):

al compute enable {org} dap2

Arraylake currently only supports enabling DAP2 on an organization-wide basis. This is subject to change in future releases.

DAP2 URL Structure

DAP2 endpoints can be accessed via the following URL schema:

https://compute.earthmover.io/v1/services/dap2/{org}/{repo}/{branch|commit|tag}/{path/to/group}/opendap

Where

{org} is the name of your Arraylake organization

{repo} is the name of the Repo

{branch|commit|tag} is the branch, commit, or tag within the Repo to use to fulfill the request

{path/to/group} is the path to the group within the Repo that contains an xarray Dataset

This URL will be called {base_url} in the following examples.

All examples use the HTTP GET method.
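
As a small illustration of the URL schema above, the following Python sketch assembles a base URL from its parts. The helper name `dap2_base_url` is hypothetical (it is not part of the Arraylake client), and it assumes that a dataset stored in the root group is addressed by leaving out the group path, as in the public GFS URL used later in this guide.

```python
from urllib.parse import quote

COMPUTE_ROOT = "https://compute.earthmover.io/v1/services/dap2"


def dap2_base_url(org, repo, ref, group=""):
    """Build a DAP2 base URL (hypothetical helper, not an Arraylake API)."""
    parts = [org, repo, ref]
    # Assumption: the root group is addressed by omitting the group path.
    parts += [p for p in group.split("/") if p]
    # Percent-encode each segment so unusual names cannot break the path.
    encoded = "/".join(quote(p, safe="") for p in parts)
    return f"{COMPUTE_ROOT}/{encoded}/opendap"


print(dap2_base_url("earthmover-public", "noaa-gfs-forecast", "main"))
```

Here `ref` stands for whichever branch, commit, or tag should serve the request.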

Integrating with Tools

The DAP2 protocol can be used by a variety of tools to subset and load datasets from Arraylake over HTTP.

Specific examples in this guide will use the Arraylake GFS Repo. The underlying GFS dataset has the following structure:

<xarray.Dataset> Size: 131TB

Dimensions: (init_time: 7163, lead_time: 209, latitude: 721, longitude: 1440)

Coordinates:

* init_time (init_time) datetime64[ns] 57kB ...

expected_forecast_length (init_time) timedelta64[ns] 57kB dask.array<chunksize=(7163,), meta=np.ndarray>

ingested_forecast_length (init_time) timedelta64[ns] 57kB dask.array<chunksize=(7163,), meta=np.ndarray>

* lead_time (lead_time) timedelta64[ns] 2kB ...

valid_time (init_time, lead_time) datetime64[ns] 12MB dask.array<chunksize=(7163, 209), meta=np.ndarray>

* latitude (latitude) float64 6kB 90.0 ....

* longitude (longitude) float64 12kB -180...

spatial_ref int64 8B ...

Data variables: (12/21)

categorical_freezing_rain_surface (init_time, lead_time, latitude, longitude) float32 6TB dask.array<chunksize=(1, 105, 121, 121), meta=np.ndarray>

categorical_snow_surface (init_time, lead_time, latitude, longitude) float32 6TB dask.array<chunksize=(1, 105, 121, 121), meta=np.ndarray>

categorical_ice_pellets_surface (init_time, lead_time, latitude, longitude) float32 6TB dask.array<chunksize=(1, 105, 121, 121), meta=np.ndarray>

downward_long_wave_radiation_flux_surface (init_time, lead_time, latitude, longitude) float32 6TB dask.array<chunksize=(1, 105, 121, 121), meta=np.ndarray>

geopotential_height_cloud_ceiling (init_time, lead_time, latitude, longitude) float32 6TB dask.array<chunksize=(1, 105, 121, 121), meta=np.ndarray>

categorical_rain_surface (init_time, lead_time, latitude, longitude) float32 6TB dask.array<chunksize=(1, 105, 121, 121), meta=np.ndarray>

... ...

relative_humidity_2m (init_time, lead_time, latitude, longitude) float32 6TB dask.array<chunksize=(1, 105, 121, 121), meta=np.ndarray>

total_cloud_cover_atmosphere (init_time, lead_time, latitude, longitude) float32 6TB dask.array<chunksize=(1, 105, 121, 121), meta=np.ndarray>

wind_v_10m (init_time, lead_time, latitude, longitude) float32 6TB dask.array<chunksize=(1, 105, 121, 121), meta=np.ndarray>

wind_u_10m (init_time, lead_time, latitude, longitude) float32 6TB dask.array<chunksize=(1, 105, 121, 121), meta=np.ndarray>

wind_v_100m (init_time, lead_time, latitude, longitude) float32 6TB dask.array<chunksize=(1, 105, 121, 121), meta=np.ndarray>

wind_u_100m (init_time, lead_time, latitude, longitude) float32 6TB dask.array<chunksize=(1, 105, 121, 121), meta=np.ndarray>

Attributes:

dataset_id: noaa-gfs-forecast

dataset_version: 0.2.7

name: NOAA GFS forecast

description: Weather forecasts from the Global Forecast System (...

attribution: NOAA NWS NCEP GFS data processed by dynamical.org f...

spatial_domain: Global

spatial_resolution: 0.25 degrees (~20km)

time_domain: Forecasts initialized 2021-05-01 00:00:00 UTC to Pr...

time_resolution: Forecasts initialized every 6 hours

forecast_domain: Forecast lead time 0-384 hours (0-16 days) ahead

forecast_resolution: Forecast step 0-120 hours: hourly, 123-384 hours: 3...

NetCDF Library

The NetCDF C library has built-in support for DAP2. Any application which uses the NetCDF C library should be able to connect to this service.

For example, the ncdump command line utility can be used to inspect a dataset. The -h flag prints only the header (dimensions, variables, and attributes) rather than the data values.

ncdump -h https://compute.earthmover.io/v1/services/dap2/earthmover-public/noaa-gfs-forecast/main/opendap
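
For context, DAP2 clients such as the NetCDF C library do not request the dataset URL as-is. They append a suffix for each response type defined by the DAP2 specification: `.dds` for the dataset structure, `.das` for the attributes, and `.dods` for binary data, optionally followed by `?` and a constraint expression that selects variables and index ranges. The sketch below only builds those URLs and sends no requests. The function name is hypothetical, and it leaves the constraint unencoded for readability.

```python
def dap2_request_urls(base_url, constraint=""):
    """Return the DAP2 request URLs derived from a dataset URL.

    Hypothetical helper for illustration. The constraint expression is
    attached to the structure (.dds) and data (.dods) requests only.
    """
    query = f"?{constraint}" if constraint else ""
    return {
        "dds": f"{base_url}.dds{query}",    # dataset structure
        "das": f"{base_url}.das",           # attributes
        "data": f"{base_url}.dods{query}",  # binary data response
    }


base = "https://compute.earthmover.io/v1/services/dap2/earthmover-public/noaa-gfs-forecast/main/opendap"
for name, url in dap2_request_urls(base).items():
    print(name, url)
```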

Subsetting and Exporting

The NCO toolkit is a suite of tools for manipulating and analyzing data stored in NetCDF format. Its ncks (netCDF Kitchen Sink) tool can be used to download a subset of the GFS dataset from Arraylake in NetCDF format. For example, this command downloads only the 2-meter temperature variable (t2m) at the first timestep of the first available model run, where the latitude is between 10 and 20 degrees and the longitude is between 30 and 40 degrees.

ncks -v t2m \

-d step,0 \

-d time,0 \

-d latitude,10.0,20.0 \

-d longitude,30.0,40.0 \

https://compute.earthmover.io/v1/services/dap2/earthmover-public/noaa-gfs-forecast/main/opendap \

subset.nc

When finished, the resulting NetCDF file is only 12 KB on disk! Taking a look with ncdump, we can see the coordinates of our downloaded subset and confirm that they match our request:

ncdump -c subset.nc

# netcdf output {

# dimensions:

# latitude = 41 ;

# longitude = 41 ;

# step = 1 ;

# time = 1 ;

# variables:

# ...

# float t2m(longitude, latitude, time, step) ;

# t2m:GRIB_NV = 0 ;

# t2m:GRIB_Nx = 1440 ;

# t2m:GRIB_Ny = 721 ;

# t2m:GRIB_cfName = "air_temperature" ;

# t2m:GRIB_cfVarName = "t2m" ;

#

# ...

# latitude = 20, 19.75, 19.5, 19.25, 19, 18.75, 18.5, 18.25, 18, 17.75, 17.5,

# 17.25, 17, 16.75, 16.5, 16.25, 16, 15.75, 15.5, 15.25, 15, 14.75, 14.5,

# 14.25, 14, 13.75, 13.5, 13.25, 13, 12.75, 12.5, 12.25, 12, 11.75, 11.5,

# 11.25, 11, 10.75, 10.5, 10.25, 10 ;

#

# longitude = 30, 30.25, 30.5, 30.75, 31, 31.25, 31.5, 31.75, 32, 32.25, 32.5,

# 32.75, 33, 33.25, 33.5, 33.75, 34, 34.25, 34.5, 34.75, 35, 35.25, 35.5,

# 35.75, 36, 36.25, 36.5, 36.75, 37, 37.25, 37.5, 37.75, 38, 38.25, 38.5,

# 38.75, 39, 39.25, 39.5, 39.75, 40 ;

#

# step = 0 ;

#

# time = 0 ;
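
The dimension sizes in this output can be checked by hand. Assuming the 0.25 degree grid shown in the Xarray output in the next section (latitude running from 90 down to -90, longitude from 0 up to 359.75), a coordinate range maps to an index range by simple arithmetic. A DAP2 constraint expression carries that index range in `[start:stride:stop]` form. The helper names below are illustrative only, and the dimension order is taken from the ncdump output above.

```python
def index_range(first, step, lo, hi):
    """Inclusive (start, stop) indices covering [lo, hi] on a regular axis.

    `first` is the coordinate at index 0 and `step` the signed spacing.
    Assumes lo and hi fall on grid points.
    """
    a = round((lo - first) / step)
    b = round((hi - first) / step)
    return min(a, b), max(a, b)


def hyperslab(var, ranges):
    """Format a DAP2 hyperslab constraint with a stride of 1."""
    return var + "".join(f"[{start}:1:{stop}]" for start, stop in ranges)


lat = index_range(90.0, -0.25, 10.0, 20.0)  # latitude is stored north to south
lon = index_range(0.0, 0.25, 30.0, 40.0)
n_lat = lat[1] - lat[0] + 1
n_lon = lon[1] - lon[0] + 1

# Dimension order (longitude, latitude, time, step) as reported by ncdump.
expr = hyperslab("t2m", [lon, lat, (0, 0), (0, 0)])
print(n_lat, n_lon, expr)
# prints: 41 41 t2m[120:1:160][280:1:320][0:1:0][0:1:0]
```

Both axes come out at 41 points, matching the `latitude = 41` and `longitude = 41` dimensions reported for the subset.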

Xarray

Xarray includes support for loading datasets via DAP. We can try it out using the GFS dataset above:

import xarray as xr

ds = xr.open_dataset("https://compute.earthmover.io/v1/services/dap2/earthmover-public/noaa-gfs-forecast/main/opendap")

ds

# <xarray.Dataset> Size: 5TB

# Dimensions: (step: 209, latitude: 721, longitude: 1440, time: 1138)

# Coordinates:

# * step (step) timedelta64[ns] 2kB 00:00:00 01:00:00 ... 16 days 00:00:00

# * latitude (latitude) float64 6kB 90.0 89.75 89.5 ... -89.5 -89.75 -90.0

# * longitude (longitude) float64 12kB 0.0 0.25 0.5 0.75 ... 359.2 359.5 359.8

# * time (time) datetime64[ns] 9kB 2024-05-12T18:00:00 ... 2025-02-21

# Data variables:

# prate (longitude, latitude, time, step) float32 988GB ...

# tcc (longitude, latitude, time, step) float32 988GB ...

# t2m (longitude, latitude, time, step) float32 988GB ...

# gust (longitude, latitude, time, step) float32 988GB ...

# r2 (longitude, latitude, time, step) float32 988GB ...

# Attributes:

# description: GFS data ingested for forecasting demo

This gives lazy, read-only access to the dataset over HTTP, so you can use Xarray to perform analysis without downloading the data up front.
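
The same regional subset requested with ncks earlier can be expressed with Xarray indexing. The sketch below is a minimal illustration, not the guide's own workflow: `subset_region` is a hypothetical helper, and it runs against a small synthetic dataset that copies the dimension names and grid of the output above, so it works offline. With the remote dataset, the `ds` returned by `xr.open_dataset` would be passed in instead, and only the selected values would be transferred when the result is loaded.

```python
import numpy as np
import xarray as xr


def subset_region(ds, var, lat_range, lon_range):
    """Select one variable over a lat/lon box at the first time and step."""
    lat_min, lat_max = lat_range
    lon_min, lon_max = lon_range
    # Latitude is stored north to south, so the slice runs from max to min.
    return (
        ds[var]
        .isel(time=0, step=0)
        .sel(latitude=slice(lat_max, lat_min), longitude=slice(lon_min, lon_max))
    )


# Synthetic stand-in for the remote dataset (assumption: same names and grid).
lat = np.arange(90.0, -90.25, -0.25)  # 721 points, 90 down to -90
lon = np.arange(0.0, 360.0, 0.25)     # 1440 points, 0 up to 359.75
demo = xr.Dataset(
    {
        "t2m": (
            ("longitude", "latitude", "time", "step"),
            np.zeros((lon.size, lat.size, 1, 1), dtype="float32"),
        )
    },
    coords={"longitude": lon, "latitude": lat, "time": [0], "step": [0]},
)

subset = subset_region(demo, "t2m", (10.0, 20.0), (30.0, 40.0))
print(dict(subset.sizes))
```

Label-based slices in Xarray include both endpoints, which is why each axis has 41 points rather than 40.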

MATLAB

MATLAB has built-in support for reading data from OPeNDAP servers via its NetCDF functions. You can read a variable directly using ncread:

url = 'https://compute.earthmover.io/v1/services/dap2/earthmover-public/noaa-gfs-forecast/main/opendap';

% Inspect the dataset

ncdisp(url)

% Inspect dimensions and sizes of a specific variable

info = ncinfo(url, 'wind_u_100m');

disp({info.Dimensions.Name; info.Dimensions.Length})

% Read a subset using start and count parameters

% In this example: a single initialization time step, the full global domain, and all forecast steps

wind_u_100m = ncread(url, 'wind_u_100m', [1 1 1 1], [1440 721 209 1]);

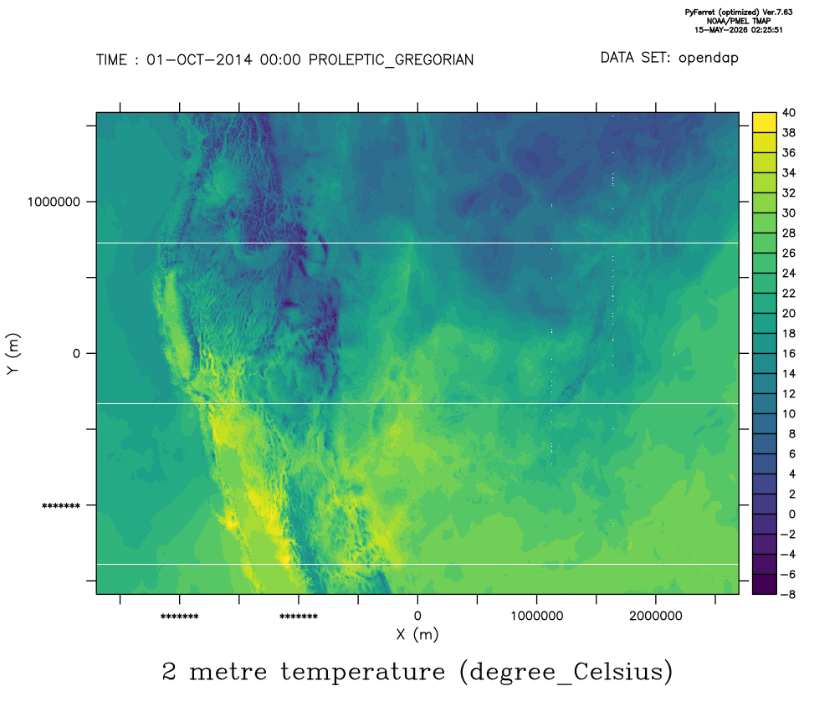
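
Before issuing a large read from any client, it can help to estimate how much data the request will move. The following Python sketch multiplies the per-dimension counts by the element size (4 bytes for float32), using the dimension lengths from the dataset summary earlier in this guide. It gives the uncompressed in-memory size, which is an upper-bound style estimate and says nothing about on-the-wire encoding. The function name is illustrative.

```python
from math import prod


def request_bytes(count, itemsize=4):
    """Uncompressed size in bytes of a hyperslab with the given counts."""
    return prod(count) * itemsize


# One full variable: (init_time, lead_time, latitude, longitude)
full_variable = request_bytes([7163, 209, 721, 1440])

# One initialization time over the full 1440 x 721 grid and all 209 lead times
one_init_time = request_bytes([1440, 721, 209, 1])

print(f"{full_variable / 1e12:.1f} TB, {one_init_time / 1e6:.0f} MB")
# prints: 6.2 TB, 868 MB
```

The first figure agrees with the 6TB per variable reported in the dataset summary, and the second shows that even a single initialization time is a sizeable download.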

Ferret

Ferret is an interactive computer visualization and analysis environment designed to meet the needs of oceanographers and meteorologists analyzing large and complex gridded data sets.

Ferret works with data in Arraylake via OPeNDAP.

Here's an example of a Ferret script that reads the HRRR analysis dataset.

use "https://compute.earthmover.io/v1/services/dap2/vandelay-industries/hrrr-analysis/main/opendap"

! Plot 2 m air temperature for the first hour in the archive (2014-10-01 00:00 UTC).

! HRRR uses a Lambert Conformal projection — the x and y axes are projection

! metres relative to the projection origin, not lat/lon.

shade/l=1 temperature_2m

! Save the plot to /work in the container (the host directory you mounted).

frame /format=png /file="hrrr_t2m.png"

exit

Output of Ferret command